Quality in Software Engineering: It's Not What You Think - Part 8: Ignoring the Boat

I specialize in developing object-oriented Java applications that align with business objectives, using Domain-Driven Design principles to ensure technical decisions drive tangible value. By focusing on a deep understanding of the business domain, I craft solutions that solve real problems while maximizing ROI. My approach evaluates the cost/benefit ratio of every decision, implementing a technology only when its benefits outweigh its costs. I’ve been called in to revive stalled projects and address challenges where others have struggled. My focus is on creating software that not only meets but exceeds business expectations. Whether working with legacy systems or modern frameworks, I select the right technologies to maximize value rather than follow trends. I believe software should be a strategic asset, and this mindset guides every decision I make in development.

In this series, we've used the Japanese vs. Dutch boat analogy to explore development speed as quality's heartbeat: the number of valuable features delivered per developer per unit of time, sustained or improved. We've covered the science-engineering cycle, coping vs. improving, resistance to change, enterprise pitfalls, and even a fraud detection challenge where OO proved its edge. But today, I want to flip the script: quality isn't what most teams think it is. To prove it, let’s return to the boat. Why do we ignore its setup and focus only on whether it reaches the finish?

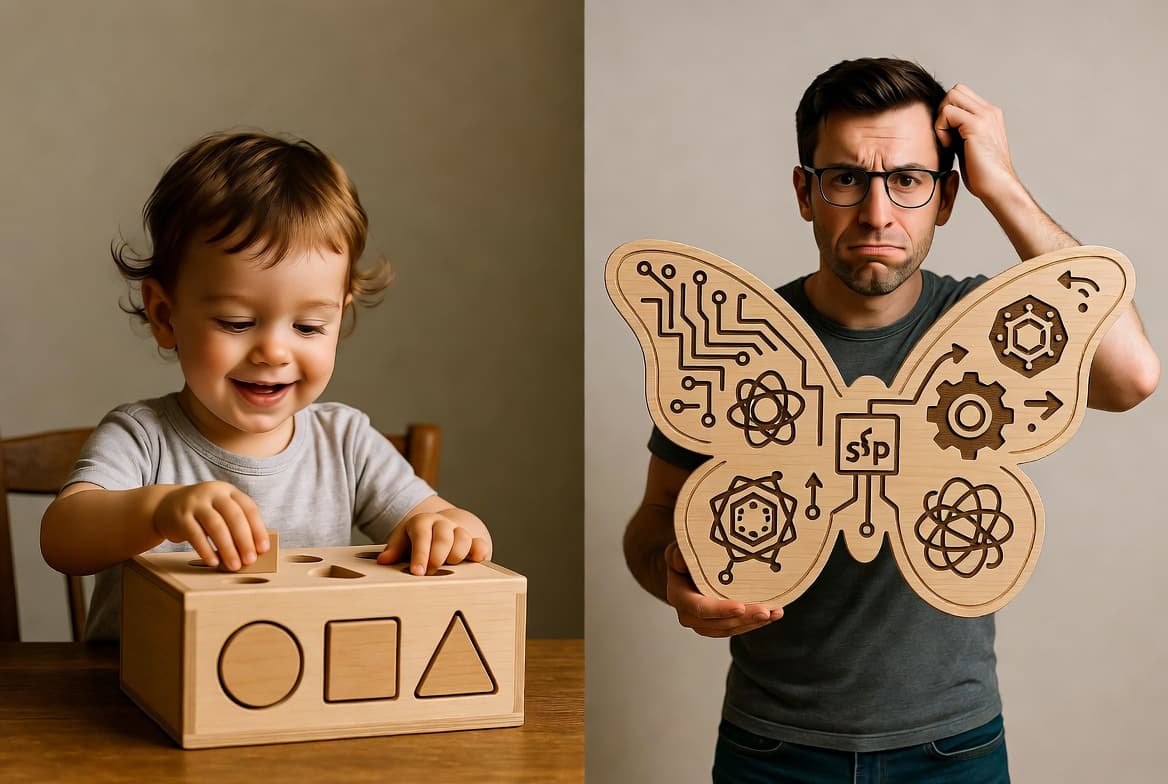

The Joke and Its Point

Recall the classic boat joke: the Japanese boat, lean with eight rowers and one coxswain, glides to victory. The other team adds managers, consultants, gadgets, and processes—complicating without improving. Everyone laughs because the inefficiency is obvious. But in software, it’s usually a one-boat race. There’s no direct competitor rowing beside us, so inefficiency is harder to see. We act as if there’s no such thing as boat design. We only care if the boat reaches the finish. Testing, code reviews, and KPIs all measure the outcome, not the setup. That is the sore spot: quality checks stop at “does it work?” not “how well is it designed to keep working?”

Why Tests Miss the Point

Testing—unit, integration, end-to-end—verifies behavior: does the app process an order correctly? Does fraud detection flag suspicious transactions? It’s essential, but limited. It only confirms the boat can float and reach the line. It doesn’t assess whether the boat is stable, scalable, or efficient. In the fraud detection example from Part 6, tests might confirm Scenario.evaluate(events) flags fraud. But they won’t ask: should data gathering belong in Pattern? Does the design allow adaptation when fraud tactics change? Without those questions, we end up coping with symptoms (bugs, debt) rather than improving the structure.
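To make this concrete, here is a minimal Java sketch. It assumes two hypothetical designs for the same fraud rule; the Event fields, the 10,000 threshold, and the names InlineScenario and LargeTotalPattern are illustrative and not taken from Part 6. The same behavioural check passes for both, so the check alone cannot tell us which boat we are rowing.

```java
import java.util.List;

// Hypothetical event type; the fields are assumptions for illustration.
final class Event {
    final String account;
    final double amount;

    Event(String account, double amount) {
        this.account = account;
        this.amount = amount;
    }
}

// Design A: data gathering and decision are fused inside one method.
class InlineScenario {
    boolean evaluate(List<Event> events) {
        double total = 0;
        for (Event e : events) {
            total += e.amount;
        }
        return total > 10_000; // hard-coded rule, hard to vary later
    }
}

// Design B: each rule is an explicit Pattern that a Scenario combines.
interface Pattern {
    boolean matches(List<Event> events);
}

class LargeTotalPattern implements Pattern {
    private final double limit;

    LargeTotalPattern(double limit) {
        this.limit = limit;
    }

    @Override
    public boolean matches(List<Event> events) {
        return events.stream().mapToDouble(e -> e.amount).sum() > limit;
    }
}

class Scenario {
    private final List<Pattern> patterns;

    Scenario(List<Pattern> patterns) {
        this.patterns = patterns;
    }

    // Flags fraud when any configured pattern matches the events.
    boolean evaluate(List<Event> events) {
        return patterns.stream().anyMatch(p -> p.matches(events));
    }
}

class SameTestTwoBoats {
    public static void main(String[] args) {
        List<Event> events = List.of(
                new Event("acc-1", 6_000),
                new Event("acc-1", 5_000));

        boolean inline = new InlineScenario().evaluate(events);
        boolean modelled = new Scenario(List.of(new LargeTotalPattern(10_000)))
                .evaluate(events);

        // Both designs satisfy the same outcome-only check.
        System.out.println(inline + " " + modelled); // prints "true true"
    }
}
```

Both calls return true, yet only the second design lets a new fraud tactic be added as another Pattern without touching evaluate. A test suite that asserts only on the result stays green for either one.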

Why Reviews Don’t Fix It

Merge requests and code reviews often amplify this limitation. They frequently fixate on syntax: “Use var,” “Rename this variable.” Rarely do they address design. And when they try, it’s already too late—post-implementation, the code is concrete, the cost of change high. Design conversations belong upfront, when a model is still malleable. A team modelling Scenario with domain experts will catch misalignments early. By contrast, a late-stage review mostly rubber-stamps code and enforces style, missing the real quality question: is this the right boat?

Automated tools like SonarQube reinforce this focus. They enforce style rules and detect surface-level issues, but they don’t touch design coherence. It’s another layer of process that celebrates compliance without questioning structure—a shiny gadget bolted onto the wrong boat.

Why Learning Stalls

If teams only ever row in boats where the sole goal is crossing the finish line, their learning becomes constrained. They get better at the process (writing unit tests, passing Sonar checks, filling in MR templates) but never build intuition about boat design itself. Quality is measured narrowly: “does it reach the finish?” As a result, teams keep rowing harder, guided by metrics that reward coping rather than improving. Over time, this creates a cycle where boat quality is never examined, and inefficiency silently compounds.

Why Explicit Models Change Everything

This is where object-oriented modelling changes the game. An explicit domain model makes the boat visible. Instead of scattered logic in pipelines and services, you have tangible objects: Order, Scenario, Pattern. Suddenly, design becomes discussable: should applyDiscount() belong in Order or Billing? Is Scenario responsible for evaluation or orchestration? These are design questions—the equivalent of asking about hull shape and oar placement—questions that shape efficiency and sustainability.
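As a sketch of what such a design conversation produces, consider one possible answer to the applyDiscount() question. Everything here is an assumption for illustration: the amounts in cents, the "at most one discount" rule, and the method names are hypothetical, not a prescribed model.

```java
// Hypothetical Order that owns its discount rule.
final class Order {
    private long totalCents;
    private boolean discounted;

    Order(long totalCents) {
        this.totalCents = totalCents;
    }

    // Because the rule lives in Order, the object guards its own invariant:
    // a discount can be applied at most once.
    void applyDiscount(int percent) {
        if (percent < 0 || percent > 100) {
            throw new IllegalArgumentException("percent must be between 0 and 100");
        }
        if (discounted) {
            throw new IllegalStateException("discount already applied");
        }
        totalCents -= totalCents * percent / 100;
        discounted = true;
    }

    long totalCents() {
        return totalCents;
    }
}

class OrderDemo {
    public static void main(String[] args) {
        Order order = new Order(20_000); // 200.00 in cents
        order.applyDiscount(10);
        System.out.println(order.totalCents()); // prints 18000
    }
}
```

Had the team decided the rule belongs in Billing instead, Order would have to expose its total for outside modification, and the invariant would need guarding elsewhere. Either choice can pass the same tests; the explicit model is what makes the trade-off visible enough to discuss.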

With explicit models, quality shifts from “does it work?” to “is it well-structured to keep working?” That’s the Japanese boat mindset: not just finishing the race, but doing so with rhythm, clarity, and less wasted effort.

Team Size and Common Vision

Ignoring the boat doesn’t just impact code—it shapes teams. In procedural or functional styles, as applications grow, logic fragments across functions, pipelines, and services. No single person holds the whole picture. To cope, organizations add more people. But larger teams mean silos, handovers, and coordination overhead. The shared vision dilutes, delivery slows, and the boat grows heavier with every extra rower and manager.

OO turns this dynamic upside down. Because the domain model is explicit, it is the shared vision. Everyone—developers, testers, analysts, even business experts—works from the same conceptual map. With the model as reference, small teams don’t just manage—they excel.

In my experience, a team of two or three developers is not only sufficient but often ideal. Even for systems with hundreds of domain entities, a three-developer team can maintain stability, speed, and low defect rates. The reason is simple: the complexity is absorbed by the model, not by coordination overhead. Adding more people doesn’t add more speed; it only erodes the common vision.

That’s why OO and small teams fit naturally together. You don’t need 10 or 20 people if the model is sound. You need a few focused minds aligned on the same boat.

The Real Definition of Quality

So what is software quality? It’s not just “working code” or “tests passing.” True quality is sustained development speed—valuable features delivered consistently without exponential cost. That requires focusing on the boat itself: its design, its efficiency, and whether it continues to fit the domain it serves.

As long as we ignore the boat, we’ll row harder, add more rowers, enforce more rules, and still fall behind. Once we put the boat itself—the domain model—at the center, we finally unlock the efficiency we keep chasing with processes and tools.