Why Development Speed Defines Software Quality - Part 1: Lessons from a Rowing Team

I specialize in developing object-oriented Java applications that align with business objectives, using Domain-Driven Design principles to ensure technical decisions drive tangible value. By focusing on a deep understanding of the business domain, I craft solutions that solve real problems while maximizing ROI. My approach evaluates the cost/benefit ratio of every decision: I only introduce a technology when its benefits outweigh its costs. I’ve been called in to revive stalled projects and address challenges where others have struggled. My focus is on creating software that not only meets but exceeds business expectations. Whether working with legacy systems or modern frameworks, I select the technologies that maximize value rather than follow trends. I believe software should be a strategic asset, and this mindset guides every decision I make in development.

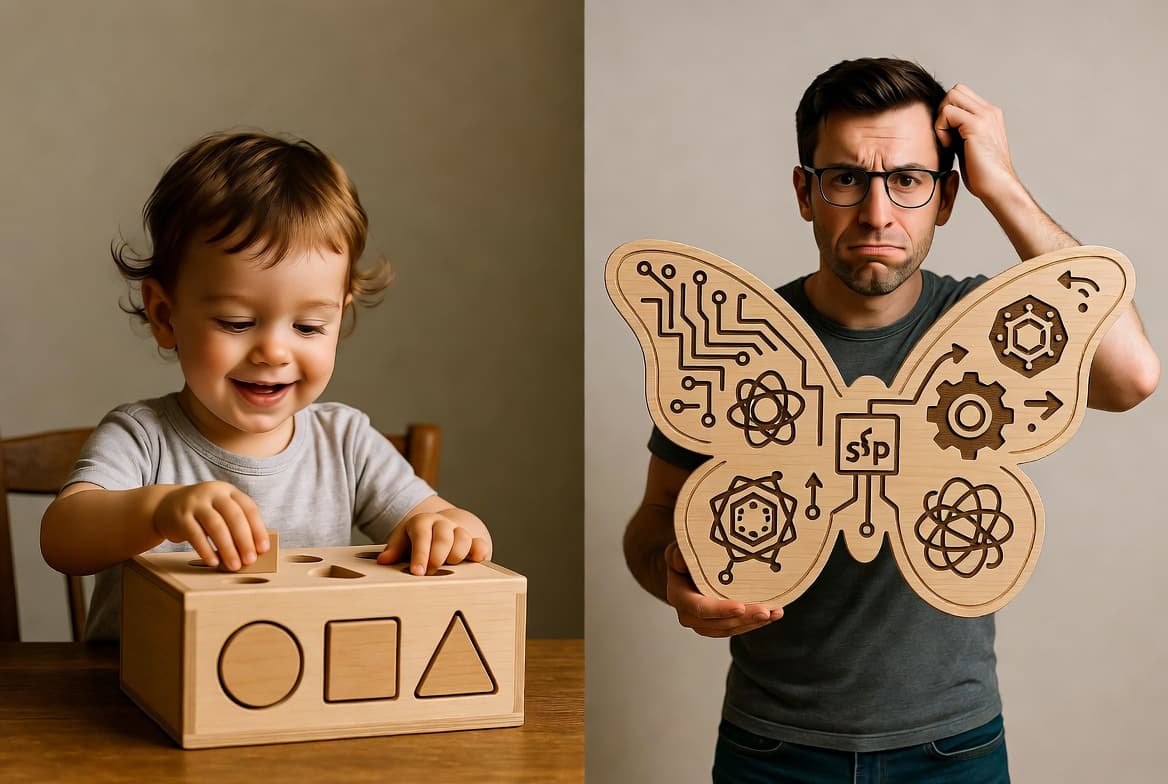

You’ve likely heard the management joke about the Japanese and Dutch rowing teams. The Japanese team, lean and synchronized, races with eight rowers and one coxswain, gliding to victory. The Dutch team? Two rowers, a manager, an agile coach, and a few middle managers. The outcome is as expected: the Dutch team loses. The management in the Dutch boat then hires consultants to “fix” the team and decides the rowers need a strength coach. Next year, they lose again (by a smaller margin, so management celebrates). They then fire the rowers, hire a recruitment firm to find “stronger” ones, and the cycle repeats. Sound familiar?

This joke mirrors a core challenge in software development. Every IT project is, in essence, unique. Usually only one of its kind exists within an organisation: a single boat in a one-team race. If it reaches the finish line (meets requirements), it’s called a success. But how do you know if your team is the efficient Japanese boat or the bloated Dutch one? With no other boats to compare against, measuring quality, effectiveness, or efficiency is tough.

The answer does not lie in 90%+ unit test coverage, SonarQube checks, and magic pipelines. Somehow, in software development, knowing and applying tools seems to be treated as the core skill, but try explaining to a carpenter that their tools matter more than knowing how to build a house! There are still too many applications that need complete overhauls, grand framework updates, or software architecture resets as the years pass. And of course there are always silver bullets that promise to miraculously prevent all problems next time around (like magic frameworks or exploding microservices).

In my opinion, the key indicator is development speed: the number of valuable features delivered per developer per unit of time, and whether that speed is sustained or, in the best scenarios, improves over time. Speed is the pulse of a project’s health. A team that consistently delivers features, sprint after sprint, likely understands the business deeply and keeps its code clean. A slowdown signals issues: technical debt, misaligned requirements, or overcomplicated processes and code. There is a point at which speed drops so low that a greenfield rebuild seems the only option. But unless the underlying problem is correctly identified, the rebuild will end up in the same place, like the Dutch team’s boat with its new rowers.
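To make the metric concrete, here is a minimal sketch of how it could be tracked. Everything in it is an assumption for illustration: the `Sprint` record, the sprint as the time unit, the made-up numbers, and the choice of a least-squares slope as the trend indicator. What counts as a "valuable feature" is left to the business; the code only does the arithmetic.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Sprint:
    """Hypothetical record of one sprint (the time unit assumed here)."""
    features_delivered: int  # features the business accepted as valuable
    developers: int          # developers active during the sprint


def speed(sprint: Sprint) -> float:
    """Valuable features delivered per developer in one sprint."""
    if sprint.developers <= 0:
        raise ValueError("a sprint needs at least one developer")
    return sprint.features_delivered / sprint.developers


def trend(speeds: list[float]) -> float:
    """Least-squares slope of speed over the sprint index.

    Near zero means the speed is sustained, positive means it improves,
    negative means the team is slowing down.
    """
    n = len(speeds)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = mean(speeds)
    numerator = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(speeds))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


# Illustrative, made-up history: same team size, fewer features each sprint.
history = [Sprint(8, 4), Sprint(8, 4), Sprint(6, 4), Sprint(4, 4)]
speeds = [speed(s) for s in history]
slope = trend(speeds)

print(speeds)
print(f"trend per sprint: {slope:+.2f}")
```

A negative slope on its own says nothing about the cause. It is only the signal to start looking at the "what" and the "how" before reaching for a strength coach.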

Speed isn’t about rushing; it’s about sustainable delivery, driven by:

A - Understanding the business domain (the “what”) through a scientific approach, like analyzing the team’s rowing rhythm.

B - Implementing as simply as possible (the “how”) with clear, readable code, like synchronized rowers without excess baggage.

In the follow-up articles, I’ll explore these two drivers and show that A and B feed each other in a continuous cycle.